Original Article

Eduweb, 2026, January-March, v.20, n.1. ISSN: 1856-7576

DOI: https://doi.org/10.46502/issn.1856-7576/2026.20.01.12

Generative AI in the eyes of the academy: Comparative analysis of faculty and student perceptions

F. Javier Miranda

Department of Business Management and Sociology, Faculty of Economics and Business Administration, University of Extremadura, Professor, Badajoz, Spain.

https://orcid.org/0000-0001-6810-1359

Antonio Chamorro-Mera

Department of Business Management and Sociology, Faculty of Economics and Business Administration, University of Extremadura, Associate professor, Badajoz, Spain.

https://orcid.org/0000-0002-6531-919X

How to cite:

Miranda, F.J., & Chamorro-Mera, A. (2026). Generative AI in the eyes of the academy: Comparative analysis of faculty and student perceptions. Revista Eduweb, 20(1), 200-216. https://doi.org/10.46502/issn.1856-7576/2026.20.01.12

Received: 09/10/25 Accepted: 24/11/25

Abstract

The integration of Generative Artificial Intelligence tools such as ChatGPT, Gemini, and Claude is transforming higher education, yet little is known about how different academic stakeholders perceive these technologies within the same institutional context. This study presents a comparative analysis of faculty and student perceptions of Gen-AI in a Spanish university, based on two surveys conducted during the 2024–2025 academic year. Using a descriptive-comparative approach and independent samples t-tests, the study identifies statistically significant differences in attitudes toward Gen-AI across dimensions such as trust, ease of use, and institutional support, alongside shared ethical concerns. Results show that students exhibit greater enthusiasm, confidence, and willingness to adopt Gen-AI tools, while faculty express more caution, particularly regarding reliability and pedagogical alignment. These findings underscore the need for differentiated institutional strategies that address the distinct expectations and challenges faced by both groups, and they provide actionable insights for designing training programs that foster ethical and effective integration of AI in teaching and learning.

Keywords: Educational innovation, generative artificial intelligence, higher education, perceptions, technology adoption.


Introduction

The integration of Generative Artificial Intelligence (Gen-AI) into higher education is rapidly reshaping the academic landscape. Tools such as ChatGPT, Gemini, and Claude are no longer emerging novelties but are becoming embedded in the daily practices of both students and faculty. Their growing presence has sparked a wave of research aimed at understanding the psychological, pedagogical, and ethical dimensions of Gen-AI adoption in university settings.

To date, most empirical studies have focused on either students or faculty in isolation. On one hand, research on university teachers has highlighted the role of perceived usefulness, attitude, and personal innovativeness in shaping their willingness to incorporate Gen-AI into teaching practices (Miranda & Chamorro-Mera, 2025a; Gutiérrez-Leefmans, 2025; Bhaskar et al., 2024; Lu et al., 2024). On the other hand, studies on students have emphasized the influence of perceived risk, social norms, and attitudes toward AI on their intention to use these tools for academic purposes (Yu & Guo, 2023; Abbas et al., 2025).

However, there is a notable gap in the literature regarding comparative analyses that examine how these two key academic stakeholders, teachers and students, perceive Gen-AI within the same institutional context. Such a perspective is essential for fostering a shared understanding of the opportunities and challenges posed by these technologies. By identifying convergences and divergences in how both groups evaluate the usefulness, risks, ease of use, and institutional support for Gen-AI, universities can design more coherent policies, targeted training programs, and pedagogical strategies that align with the needs and expectations of their academic communities.

This article addresses that gap by presenting a comparative analysis of two surveys conducted at the University of Extremadura (Spain), one targeting faculty and the other students. Using a set of shared indicators, the study explores statistically significant differences in their perceptions of Gen-AI, offering insights into how these tools are being received across the academic spectrum and what this means for their ethical and effective integration into higher education.

This study contributes to the academic literature by offering a comparative perspective that is rarely addressed: the simultaneous analysis of faculty and student perceptions of generative AI within the same institutional context. Unlike previous research grounded in specific theoretical models, this article adopts a descriptive-comparative approach that allows for a more flexible and context-sensitive exploration of attitudes toward Gen-AI. The article is structured as follows: the next section reviews the relevant literature; this is followed by a detailed explanation of the methodology used; the fourth section presents the results of the comparative analysis; the fifth discusses the implications of the findings; and the final sections offer conclusions, acknowledge limitations, and suggest directions for future research.

Literature Review

The integration of Gen-AI into higher education has sparked a growing body of research exploring its pedagogical, ethical, and technological implications. While early studies focused on the capabilities of large language models (LLMs) such as ChatGPT, more recent work has shifted toward understanding the psychological and contextual factors that shape adoption among different academic stakeholders.

Recent studies have begun to explore not only the drivers of Gen-AI adoption but also its unintended consequences, particularly among students. Abbas et al. (2025) conducted a time-lagged study across six universities in Pakistan, revealing that students with high innovation consciousness are more likely to use ChatGPT, while those with a high need for cognition do not necessarily rely on it. Importantly, their findings suggest that frequent use of Gen-AI tools is positively associated with AI addiction and increased tolerance for academic dishonesty. These results underscore the need for institutions to promote ethical awareness and critical engagement with AI tools, especially in contexts where digital literacy and academic integrity norms vary widely (Abbas et al., 2025).

Complementing this, Batista et al. (2024) conducted a systematic literature review of 37 empirical studies on Gen-AI in higher education, identifying three dominant themes: application of Gen-AI technologies, stakeholder perceptions, and specific use cases. Their review highlights both the versatility of Gen-AI and the challenges it poses, including assessment integrity, institutional readiness, and the need for pedagogical adaptation (Batista et al., 2024). Other systematic reviews highlight both opportunities and risks of Gen-AI adoption in higher education (Salas-Pilco & Yang, 2022; González Torres et al., 2025). Similarly, He et al. (2024) conducted a large-scale survey at the Hong Kong University of Science and Technology, revealing that students’ adoption of Gen-AI tools is influenced by perceived usefulness, peer influence, and prior experience with AI technologies. Together, these studies underscore the need for universities to promote ethical awareness, critical engagement, and context-sensitive strategies for Gen-AI integration.

Research on students has emphasized a broader range of psychological and social variables. While perceived usefulness and attitude remain central to adoption (Yilmaz et al., 2023; Soliman et al., 2024; Ríos-Hernández Mateus et al., 2024), students’ decisions are also shaped by perceived risk, subjective norms, and their attitudes toward the future of AI (Abbas et al., 2025; Ivanov et al., 2024).

Students often adopt Gen-AI tools for practical purposes such as summarizing texts, generating ideas, or improving writing (Shahzad et al., 2024; Shevel et al., 2025). However, their use is frequently self-directed, with limited formal training and ethical guidance. This raises concerns about misinformation, plagiarism, and the lack of critical engagement with AI-generated content (Shahzad et al., 2024).

Moreover, students appear to be more sensitive to social influence, particularly from peers and instructors. The perception that Gen-AI use is normalized or encouraged within their academic environment can significantly shape their attitudes and intentions (Hamamra et al., 2024).

Research on Gen-AI adoption in higher education has frequently drawn on established theoretical frameworks such as the Technology Acceptance Model (TAM) and the Theory of Planned Behaviour (TPB). While these models have been applied to both students and faculty, studies focusing on lecturers often highlight attitude and perceived usefulness as the most influential predictors of intention to use Gen-AI in teaching contexts (Miranda & Chamorro-Mera, 2025b; Bhaskar et al., 2024; Lu et al., 2024). Faculty members are more likely to adopt these tools when they perceive them as enhancing productivity, supporting innovation, or facilitating personalized instruction.

Other factors such as perceived ease of use, personal innovativeness, and self-efficacy have shown more modest effects. Interestingly, subjective norms (the perceived expectations of colleagues, students, or institutional leadership) have not consistently emerged as significant predictors in Western academic contexts, suggesting that faculty decisions may be more autonomous and less socially driven (Strzelecki et al., 2024).

Despite the generally positive attitudes toward Gen-AI, concerns persist regarding ethical risks, including academic dishonesty, overreliance on automation, and the erosion of critical thinking. These concerns highlight the need for institutional policies that promote responsible and informed use of AI in teaching.

Despite the growing interest in Gen-AI adoption, few studies have examined how faculty and students perceive these tools within the same institutional context. This gap is particularly relevant given that effective and ethical integration of Gen-AI in higher education requires alignment between teaching practices and learning behaviours.

An exception is the study by Ivanov et al. (2024), which analysed both lecturers and students using the Theory of Planned Behaviour. However, their data came from multiple universities and countries, focusing only on actual users, whereas our study examines users and non-users within a single institutional context.

A comparative approach can reveal whether both groups share similar expectations, concerns, and levels of preparedness, or whether there are significant mismatches that could hinder pedagogical coherence. Understanding these dynamics is essential for designing institutional strategies that are inclusive, context-sensitive, and responsive to the needs of all academic actors.

Unlike previous studies that applied structural models based on constructs such as perceived usefulness, attitude, or subjective norms (e.g., TAM or TPB), the present research adopts a descriptive-comparative approach. Rather than testing theoretical relationships, this study focuses on analysing individual survey items that reflect perceptions, attitudes, and experiences related to the use of Generative AI tools in academic contexts. These items were inspired by validated constructs used in earlier research but are treated here as standalone indicators to explore differences between faculty and student responses. This approach allows for a more flexible and context-sensitive examination of how Gen-AI is perceived across academic roles, without the constraints of a predefined theoretical model.

Methodology

This study adopts a descriptive and comparative approach to explore how university faculty and students perceive the use of Gen-AI tools in academic contexts. The data analysed in this article were collected through two online surveys conducted during the 2024–2025 academic year at the University of Extremadura (Spain). The first survey was completed by 61 faculty members from various academic disciplines within the university. The second survey involved 150 undergraduate and master’s students. Participation in both surveys was voluntary and anonymous.

While the study offers valuable comparative insights, certain methodological limitations regarding sample size and representativeness should be acknowledged. The faculty sample (n=61), although sufficient for conducting basic statistical analyses such as independent samples t-tests, is relatively small compared to the overall teaching staff at the University of Extremadura. This may limit the generalizability of the findings, particularly across underrepresented academic disciplines. Furthermore, participation in both surveys was voluntary and anonymous, introducing the possibility of self-selection bias. It is likely that respondents had a greater interest in or familiarity with generative AI technologies, which could have influenced their responses. As such, the results should be interpreted with caution, recognizing that they reflect the views of a self-selected group rather than a fully representative cross-section of the university community.

The questionnaires included 16 items grouped into five dimensions that reflect key constructs discussed in the literature: (1) Ethical perceptions (e.g., ethical use of Gen-AI, concern for academic integrity), which assess awareness of responsible practices and potential risks; (2) Perceived usefulness (e.g., productivity and quality with Gen-AI, improvement in academic performance, technical problem-solving, adaptation and future readiness), capturing beliefs about the practical benefits of Gen-AI in academic tasks; (3) Ease of use and integration (e.g., ease of use of Gen-AI, ability to integrate Gen-AI), which measure perceived simplicity and confidence in incorporating these tools into workflows; (4) Institutional and social support (e.g., institutional support for Gen-AI, influence of admired individuals), reflecting the role of formal policies and informal norms; and (5) Willingness and attitudes toward adoption (e.g., willingness to use Gen-AI, recommendation of Gen-AI, willingness to learn about Gen-AI, willingness to pay for Gen-AI, confidence in Gen-AI results, accuracy of Gen-AI information), which indicate openness to adoption and trust in outputs.

Each item was measured on a 5-point Likert scale ranging from 1 (“strongly disagree”) to 5 (“strongly agree”). This structure ensures alignment with theoretical constructs such as perceived usefulness, ease of use, and social influence, while allowing for a nuanced comparison between faculty and student perceptions.

The analysis focused on descriptive statistics and independent samples t-tests to identify statistically significant differences between faculty and student responses. The independent samples t-test was used to compare the means of two independent groups (faculty and students) for each survey item, under the assumptions of normal distribution and homogeneity of variances. This test evaluates whether the observed differences in group means are statistically significant or likely due to random variation.
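As an illustration of the test described above, the following minimal sketch computes a pooled-variance (Student's) independent samples t statistic using only the Python standard library. The function name `pooled_t_test` and the two response lists are hypothetical and are not taken from the study's data; the sketch assumes the equal-variance form of the test, in line with the homogeneity assumption stated in the text.

```python
import math
from statistics import mean, variance


def pooled_t_test(group_a, group_b):
    """Student's independent samples t statistic with pooled variance.

    Returns (t, degrees_of_freedom). Assumes roughly normal data and
    equal variances in both groups.
    """
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # Pooled variance: sample variances weighted by their degrees of freedom.
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / df
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, df


# Hypothetical 5-point Likert responses, NOT data from the study.
students = [5, 4, 4, 5, 3, 4, 5, 4]
faculty = [3, 4, 2, 3, 4, 3]

t_stat, dof = pooled_t_test(students, faculty)
print(f"t = {t_stat:.3f}, df = {dof}")
```

The resulting t value would then be compared against the t distribution with the returned degrees of freedom to obtain a p-value. If the homogeneity assumption were in doubt, Welch's unequal-variance variant would be a common alternative.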

In addition to reporting descriptive statistics and p-values from independent samples t-tests, this study also includes Cohen’s d effect sizes to assess the magnitude of differences between faculty and student responses. Cohen’s d is a widely used metric in educational and psychological research that quantifies the standardized difference between two means, offering insight into the practical significance of the results beyond statistical significance alone.
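The standardized difference can be sketched in a few lines. The example below assumes the common pooled standard deviation form of Cohen's d; the article does not state which variant was used, and the function name `cohens_d` and the response lists are hypothetical illustrations rather than study data.

```python
import math
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d: mean difference divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_sd = math.sqrt(
        ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b))
        / (n_a + n_b - 2)
    )
    return (mean(group_a) - mean(group_b)) / pooled_sd


# Hypothetical 5-point Likert responses, NOT data from the study.
students = [5, 4, 4, 5, 3, 4, 5, 4]
faculty = [3, 4, 2, 3, 4, 3]

d = cohens_d(students, faculty)
print(f"Cohen's d = {d:.3f}")
```

Because d is expressed in standard deviation units, it does not grow with sample size the way a t statistic does, which is why it complements the p-value.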

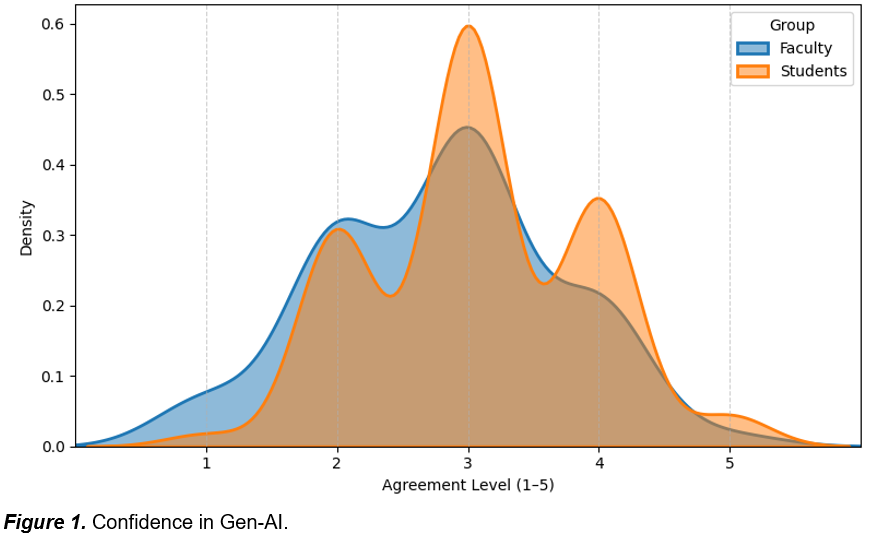

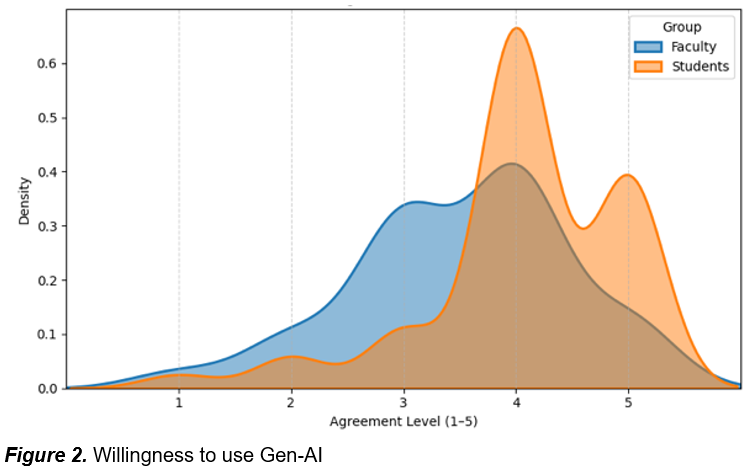

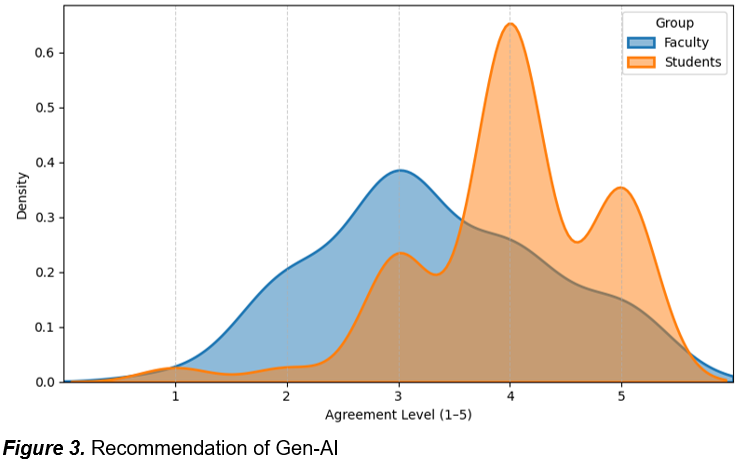

For items showing significant differences (p < 0.05), the results were further illustrated using kernel density estimation (KDE) plots to visualize the distribution of responses across groups. These plots provide a smoothed representation of the response distributions, allowing for a clearer comparison of patterns between faculty and students. This methodological approach enables a nuanced understanding of how Gen-AI is perceived across academic roles, without relying on a predefined theoretical framework.
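To make the idea of a kernel density estimate concrete, the sketch below evaluates a Gaussian KDE on a grid spanning the 1 to 5 response scale. It is an assumption-laden illustration: the fixed bandwidth of 0.5, the function name `gaussian_kde`, and the response lists are all hypothetical, and the article does not report which kernel or bandwidth its plots used.

```python
import math


def gaussian_kde(samples, grid, bandwidth):
    """Evaluate a Gaussian kernel density estimate at each grid point."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))
    return [
        norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)
        for x in grid
    ]


# Hypothetical 5-point Likert responses, NOT data from the study.
students = [5, 4, 4, 5, 3, 4, 5, 4]
faculty = [3, 4, 2, 3, 4, 3]

grid = [1 + 0.5 * i for i in range(9)]  # 1.0, 1.5, ..., 5.0
bandwidth = 0.5  # assumed fixed bandwidth, chosen only for illustration

student_density = gaussian_kde(students, grid, bandwidth)
faculty_density = gaussian_kde(faculty, grid, bandwidth)

for x, dens_s, dens_f in zip(grid, student_density, faculty_density):
    print(f"x = {x:.1f}  students = {dens_s:.3f}  faculty = {dens_f:.3f}")
```

Plotting the two density lists against the grid would give smoothed curves of the kind described above. Since Likert responses are discrete, the smoothing is a visual aid rather than a claim that intermediate values were observed.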

Results

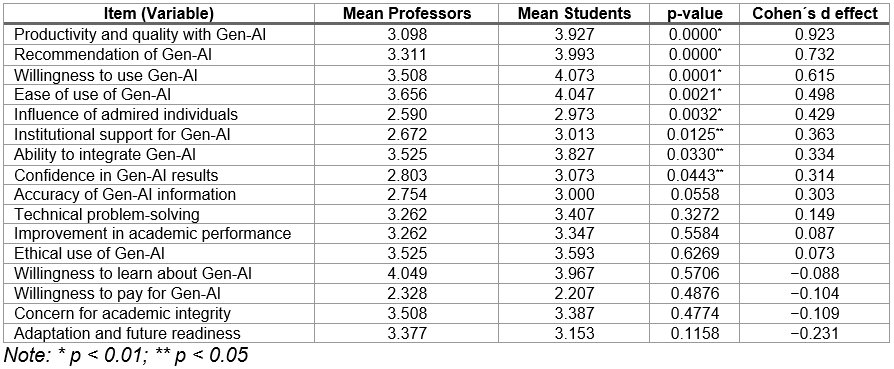

Table 1 presents a summary of the mean scores for faculty and students across various survey items related to their perceptions of Gen-AI. It includes the results of independent samples t-tests conducted to determine whether the observed differences between the two groups are statistically significant. This comparative overview highlights key areas of divergence and convergence in attitudes toward Gen-AI, serving as a quantitative foundation for the subsequent visual and interpretive analysis.

To complement the statistical significance analysis, table 1 includes Cohen’s d effect sizes for each survey item. While p-values indicate whether differences between faculty and student responses are statistically significant, Cohen’s d provides a standardized measure of the magnitude of those differences. This addition allows for a clearer interpretation of the practical relevance of the observed gaps in perception.

Table 1.

T-test Results: Professors vs. Students on Gen-AI Perceptions

The comparative analysis between faculty and students reveals a consistent pattern of divergence in their perceptions of generative AI, particularly in areas related to trust, usability, and integration into academic practices. Statistically significant differences across several items suggest that students generally exhibit greater enthusiasm and confidence toward these tools.

To begin with, students reported significantly higher confidence in the results generated by Gen-AI tools (p = 0.0443), indicating a stronger belief in their reliability (see figure 1). This difference may stem from more frequent use or a lower awareness of potential limitations.

Although the difference in perceived accuracy did not reach statistical significance (p = 0.0558), the trend also favoured students, reinforcing their more optimistic stance.

This greater trust is further reflected in students’ markedly higher willingness to use Gen-AI (mean = 4.073 vs. 3.508; p = 0.0001) and to recommend it to others (mean = 3.993 vs. 3.311; p < 0.0001). These findings suggest that students not only accept Gen-AI more readily but also act as informal advocates for its adoption within academic settings.

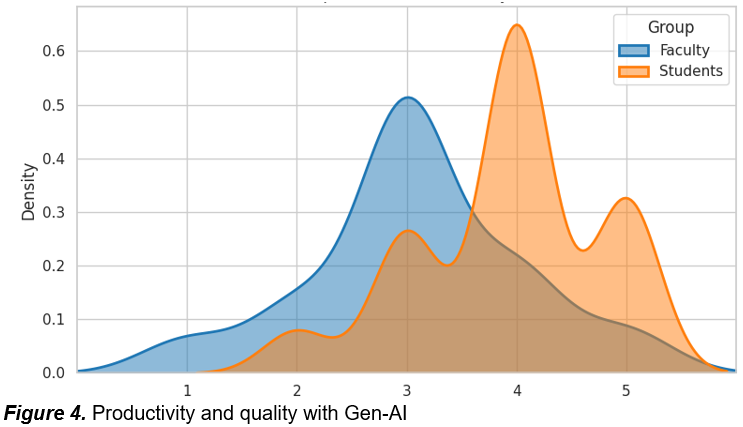

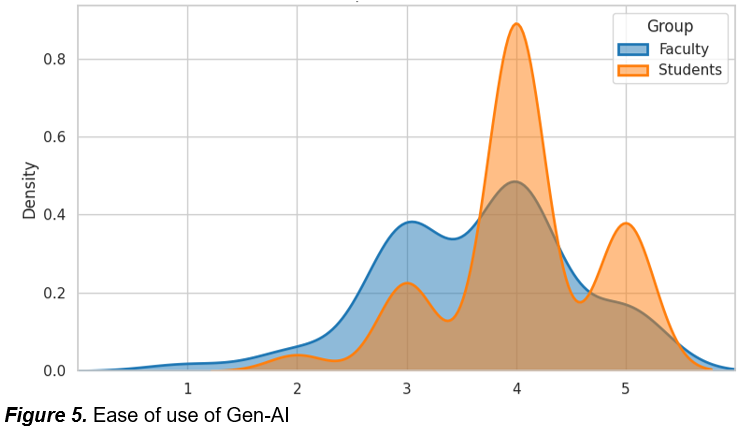

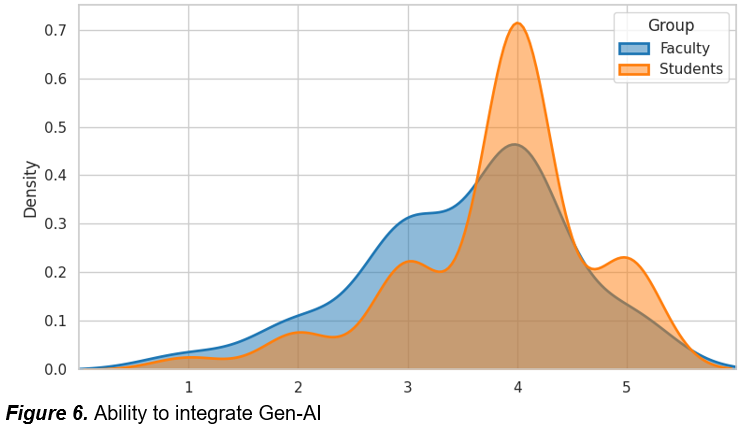

In line with this favourable attitude (see figure 4), students perceive Gen-AI as more beneficial for enhancing productivity and academic quality (mean = 3.927 vs. 3.098; p < 0.0001). This perception is supported by their higher ratings of ease of use (p = 0.0021) and greater confidence in their ability to integrate these tools into academic tasks (p = 0.033) (see figures 5 & 6). Taken together, these results point to a generational or experiential divide in how Gen-AI is evaluated in terms of practical utility.

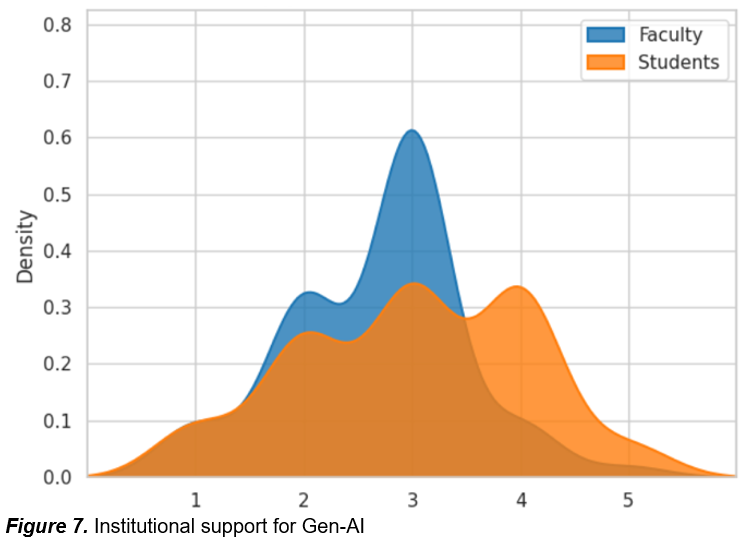

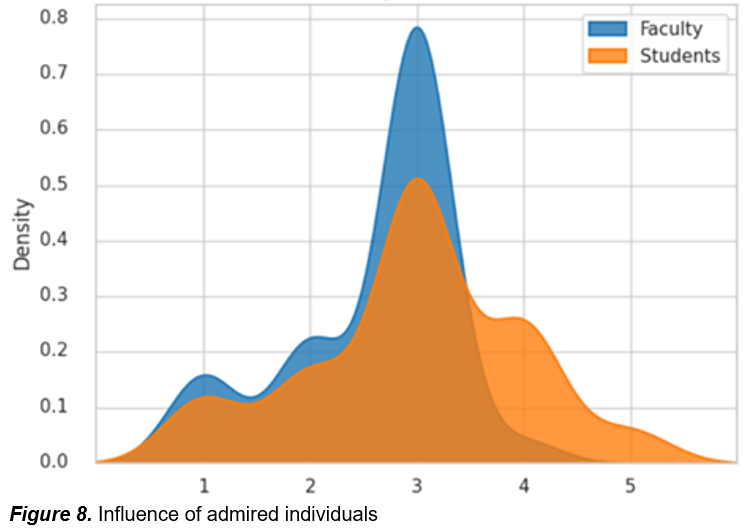

Moreover, students reported higher perceptions of institutional support (p = 0.0125) and were more influenced by admired individuals (p = 0.0032), suggesting that both formal and informal cues play a stronger role in shaping their attitudes (see figures 7 & 8). In contrast, faculty appear to rely more on personal judgment and professional norms, which may explain their more cautious stance.

Finally, although several items did not show statistically significant differences, they still offer relevant insights. Both groups expressed moderate agreement regarding the ethical use of Gen-AI (professors: 3.525; students: 3.593) and concerns about academic integrity (professors: 3.508; students: 3.387), indicating a shared awareness of potential risks. Similarly, both faculty and students showed strong interest in learning more about Gen-AI (professors: 4.049; students: 3.967), while their willingness to pay for such tools remained low (professors: 2.328; students: 2.207), suggesting that, despite high interest, concerns about value or accessibility persist. No significant differences were observed in perceptions of Gen-AI’s impact on academic performance (professors: 3.262; students: 3.347), technical problem-solving (professors: 3.262; students: 3.407), or future readiness (professors: 3.377; students: 3.153), although faculty reported slightly higher scores in the latter, possibly reflecting their role in institutional planning.

Discussion

The comparative analysis between faculty and students reveals a consistent divergence in their perceptions of generative AI. Students, often digital natives, display greater confidence and enthusiasm, integrating these tools into their academic routines for tasks such as summarization, idea generation, and writing support. Faculty, by contrast, adopt a more cautious stance, shaped by concerns about reliability, ethical risks, and pedagogical alignment. In practice, this means that students tend to adopt Gen-AI autonomously, while faculty require structured institutional support and clear ethical frameworks to embed these tools responsibly in teaching.

These findings both confirm and nuance previous research. Studies such as He et al. (2024) and Yilmaz et al. (2023) highlight students’ perception of usefulness and ease of use, which our results reinforce. Faculty concerns mirror those reported by Kim et al. (2025) and Batista et al. (2024), emphasizing ethical risks and the need for pedagogical adaptation. However, our study contributes a novel perspective by examining both groups within the same institutional context, revealing the coexistence of enthusiasm and skepticism in a shared environment. This contrast suggests that institutional strategies must adapt global recommendations to local dynamics, ensuring coherence between teaching practices and learning behaviours.

A critical reflection on the methodology highlights potential biases. Voluntary participation may have attracted respondents already interested in Gen-AI, and the relatively small faculty sample limits representativeness across disciplines. Institutional culture and disciplinary traditions may also have shaped responses, meaning that results are partly contingent on local conditions. Beyond statistical significance, the reported effect sizes provide practical meaning: large effects, such as productivity (d = 0.923), indicate substantial gaps with immediate implications for policy, while medium and small effects highlight areas where differences exist but may be less impactful in practice. This differentiation underscores the importance of prioritizing institutional strategies around the most consequential divergences.
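The reported figures for the productivity and quality item can be cross-checked with simple arithmetic. The sketch below uses only values stated in the article (group means of 3.927 and 3.098, d = 0.923, and sample sizes of 150 and 61) and assumes that d was computed with a pooled standard deviation and that the classic equal-variance t-test was used. Under those assumptions, the pooled standard deviation equals the mean difference divided by d, and t equals d multiplied by the square root of n1·n2 / (n1 + n2). The variable names are illustrative.

```python
import math

# Values reported in the article for the productivity and quality item.
n_students, n_faculty = 150, 61
mean_students, mean_faculty = 3.927, 3.098
reported_d = 0.923

mean_difference = mean_students - mean_faculty

# Assumption: d = mean difference / pooled SD, so the pooled SD is implied.
implied_pooled_sd = mean_difference / reported_d

# Assumption: Student's t with pooled variance, for which
# t = d * sqrt(n1 * n2 / (n1 + n2)).
implied_t = reported_d * math.sqrt(
    n_students * n_faculty / (n_students + n_faculty)
)

print(f"mean difference   = {mean_difference:.3f}")
print(f"implied pooled SD = {implied_pooled_sd:.3f}")
print(f"implied t         = {implied_t:.2f}")
```

An implied pooled standard deviation a little under one scale point and a t value of roughly 6 are consistent with the very small p-value reported for this item. If the study used a different effect size variant or Welch's test, the implied values would differ somewhat.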

The results can also be interpreted through theoretical frameworks. Within the Technology Acceptance Model, students score higher on perceived usefulness and ease of use, while faculty responses reflect stronger attitudes and ethical concerns. As Parga García (2023) points out, it is necessary to educate with AI with a critical, ethical, and humanistic vision. The Theory of Planned Behaviour explains students’ sensitivity to social influence, whereas faculty decisions appear more autonomous. Digital literacy perspectives further clarify the divide: students demonstrate functional literacy but limited critical literacy, while faculty emphasize critical evaluation. Together, these insights underline the need to foster both functional and critical AI literacy, tailoring support to the distinct roles of students and faculty.

From a practical perspective, these findings suggest that universities should invest in differentiated strategies. For faculty, training should focus not only on technical proficiency but also on pedagogical models and ethical frameworks (Sánchez-Trujillo et al., 2025). For students, embedding Gen-AI literacy into curricula, as the same authors suggest, and promoting critical engagement with AI-generated content are essential. Institutional policies must bridge the perceptual gap, ensuring that enthusiasm among students is matched by faculty readiness and pedagogical coherence.

Finally, future research should expand the scope by including larger and more diverse samples, conducting longitudinal studies to track evolving perceptions, and incorporating qualitative methods such as interviews or focus groups to capture the reasoning behind attitudes. Such approaches would deepen understanding of how generative AI reshapes higher education and provide more robust guidance for institutional strategies.

Conclusions

Practical implications

The comparative analysis between faculty and students at the University of Extremadura reveals a complex and nuanced landscape of perceptions regarding the integration of Gen-AI tools in higher education. These findings align with and extend recent literature that underscores both the promise and the ambivalence surrounding Gen-AI adoption in academic contexts (Yilmaz et al., 2023; Ivanov et al., 2024; Batista et al., 2024).

One of the most consistent patterns observed is the greater enthusiasm and optimism among students. They report significantly higher levels of confidence in Gen-AI outputs, greater perceived productivity gains, and a stronger willingness to use and recommend these tools. These results are consistent with recent global surveys indicating that over 80% of students are already using Gen-AI tools regularly, often without formal institutional guidance (Campbell Academic Technology Services, 2025). This widespread adoption may explain their higher comfort levels and perceived benefits, particularly in tasks such as summarization, ideation, and writing support.

In contrast, faculty responses reflect a more cautious and critical stance, particularly regarding the reliability and ethical implications of Gen-AI. Kim et al. (2025) and Fernández-Miranda et al. (2024) found that while both students and instructors are experimenting with Gen-AI tools, faculty express more concern about ethical risks and the pedagogical value of these technologies. These concerns include the erosion of critical thinking, the potential for academic dishonesty, and the lack of alignment between Gen-AI’s commercial logic and educational goals.

The statistically significant differences in perceived ease of use and ability to integrate Gen-AI further highlight a generational or experiential divide. Students, often digital natives, find these tools more intuitive and seamlessly incorporable into their workflows. Faculty, on the other hand, may face steeper learning curves or institutional constraints that hinder integration. This echoes findings from Batista et al. (2024), who emphasize the need for targeted professional development and institutional strategies that support faculty in navigating the pedagogical and ethical complexities of Gen-AI.

Beyond the statistical differences, the findings also invite reflection on the pedagogical implications of Gen-AI integration. Curriculum design and instructional strategies may need to evolve to better align with students’ growing familiarity and reliance on these tools. This could include embedding Gen-AI literacy into course objectives, promoting critical engagement with AI-generated content, and training students to use these technologies ethically and effectively.

Additionally, the observed divergences may be partially explained by generational or cultural factors. Students, often digital natives, may approach Gen-AI with greater openness and confidence, while faculty, shaped by different technological and educational paradigms, may exhibit more caution. Future research should explore how age, disciplinary background, and cultural context influence perceptions and adoption patterns, as these variables could inform more tailored and inclusive institutional strategies.

Another notable finding is the greater influence of admired individuals on students’ attitudes toward Gen-AI. This suggests that peer norms and role models play a significant role in shaping student behaviour, a dynamic well-documented in technology adoption literature. Faculty, by contrast, appear to rely more on professional judgment and institutional policy, reinforcing the importance of leadership and policy clarity in shaping faculty engagement.

The perceived lack of institutional support, particularly among faculty, raises important questions about how universities are communicating and operationalizing their AI strategies. While students may interpret access to tools as implicit support, faculty may expect more structured guidance, training, and ethical frameworks. This disconnect underscores the need for clear, inclusive, and role-sensitive institutional policies that address the distinct needs and expectations of both groups.

From a managerial perspective, these findings offer several actionable insights. First, institutions must address the perceptual and experiential gap between students and faculty. While students exhibit high levels of enthusiasm, confidence, and perceived utility regarding Gen-AI tools, faculty responses are more cautious and reflect concerns about reliability, ethical risks, and pedagogical alignment. This divergence suggests that institutions must invest in tailored training and support structures for faculty, not only focusing on technical proficiency but also on pedagogical strategies and ethical frameworks that enable meaningful and responsible integration of Gen-AI into teaching practices.

Second, the high levels of student engagement with Gen-AI present an opportunity to promote critical and reflective use of these tools. Institutions should consider embedding Gen-AI literacy into the curriculum, encouraging students to evaluate AI-generated content thoughtfully and ethically. Leveraging student enthusiasm through peer-led initiatives or ambassador programs could further enhance responsible adoption.

Third, the strategic role of leadership and role modelling cannot be overstated. Faculty champions and institutional leaders who demonstrate thoughtful and effective uses of Gen-AI can help shape a culture of responsible innovation. Their visibility and example can bridge the gap between policy and practice, fostering trust and engagement across the academic community.

Finally, universities must articulate their stance on Gen-AI use, define acceptable practices, and provide accessible resources that support both innovation and academic integrity. These policies should be co-developed with input from diverse academic stakeholders to ensure they are context-sensitive and widely accepted. As recent studies suggest (Kim et al., 2025), demographic and disciplinary diversity must be considered when designing these strategies, as perceptions and usage patterns vary significantly across groups.

These recommendations are directly supported by the statistical evidence reported in this study. The large effect size observed in perceptions of productivity and quality (d=0.923) underscores the urgency of embedding Gen-AI literacy into curricula, while medium effects such as willingness to use (d=0.615) highlight the need for faculty training programs. Smaller effects, including confidence in Gen-AI results (d=0.314), suggest areas where differences are less pronounced but should be monitored over time. By linking institutional actions to the magnitude of observed effects, universities can prioritize strategies that address the most consequential gaps while remaining attentive to emerging challenges.
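To make the large, medium, and small labels above concrete, the following minimal sketch shows one common way to compute and classify Cohen's d. It assumes the pooled-standard-deviation form of d and Cohen's conventional cut-offs (0.2, 0.5, 0.8); the study does not state which formula or thresholds were applied, so both are assumptions, and the function names `cohens_d` and `effect_label` are hypothetical. Only the three d values are taken from the text.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d for two independent samples, using the pooled SD.

    Assumption: the pooled-variance form; the article does not specify it.
    """
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


def effect_label(d):
    """Classify |d| with Cohen's conventional thresholds (an assumption)."""
    magnitude = abs(d)
    if magnitude >= 0.8:
        return "large"
    if magnitude >= 0.5:
        return "medium"
    if magnitude >= 0.2:
        return "small"
    return "negligible"


# Effect sizes as reported in the paragraph above.
reported = {
    "productivity and quality": 0.923,
    "willingness to use": 0.615,
    "confidence in Gen-AI results": 0.314,
}

for dimension, d in reported.items():
    print(f"{dimension}: d={d} ({effect_label(d)})")
```

Under these conventional thresholds, the three reported values fall into the large, medium, and small bands respectively, which matches the prioritization described in the text.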

The successful integration of Gen-AI in higher education requires more than access to technology. It demands coordinated efforts in training, policy, communication, and leadership that recognize the distinct needs and perspectives of both students and faculty, while fostering a shared vision of ethical and effective AI use in academic life.

General conclusions

This study provides a comparative perspective on how faculty and students perceive the integration of Gen-AI tools in higher education. Drawing on survey data from a Spanish university, the analysis reveals significant differences in eight key areas: confidence in Gen-AI results, ability to integrate these tools, ease of use, institutional support, influence of admired individuals, willingness to use Gen-AI, willingness to recommend it, and perceived impact on productivity and quality. Students consistently report higher levels of confidence, greater perceived usability, and stronger willingness to adopt and advocate for Gen-AI, while faculty, although generally open to innovation, express more caution, particularly regarding reliability and ethical implications.

These findings underscore the importance of role-specific strategies for Gen-AI integration. While students appear ready to embrace these tools, often driven by peer influence and perceived utility, faculty may require more structured support, training, and policy clarity to align Gen-AI use with pedagogical goals and academic standards. The divergence in perceptions also highlights the need for institutional coherence: successful and ethical integration of Gen-AI depends not only on technological access but also on shared understanding, mutual trust, and inclusive governance.

However, this study is not without limitations. First, the sample is limited to a single institution, which may constrain the generalizability of the findings. Cultural, disciplinary, and institutional differences across universities could yield different patterns of perception and adoption. Second, the study relies on self-reported data, which may be subject to social desirability bias or limited awareness of Gen-AI capabilities. Third, the cross-sectional design captures perceptions at a specific moment in time, without accounting for how attitudes may evolve as Gen-AI tools become more embedded in academic routines.

It is also important to acknowledge the relatively small faculty sample, which, although adequate for basic statistical tests, may limit the generalizability of the findings across all academic disciplines. This constraint, combined with the voluntary nature of participation, suggests that the results should be interpreted with caution.

These methodological constraints may have influenced the results in specific ways. The voluntary nature of participation likely attracted respondents with greater interest in Gen-AI, which could partly explain the high levels of enthusiasm reported by students. Similarly, the relatively small faculty sample may have amplified the cautious stance observed, as certain disciplines or perspectives may be underrepresented. The single-institution context also means that perceptions are shaped by local policies, resources, and cultural factors, which may not reflect broader academic environments. For these reasons, the findings should be interpreted as indicative rather than universally generalizable, and institutional strategies derived from them must be adapted carefully to other contexts.

Future research should build directly on the divergences observed in this study. The strong enthusiasm among students calls for longitudinal studies that track whether early adoption translates into sustained academic benefits or risks of overreliance. The cautious stance of faculty highlights the need for qualitative approaches (such as interviews or focus groups) to explore the underlying pedagogical and ethical concerns that survey data cannot fully capture. The influence of admired individuals on students’ attitudes suggests examining the role of peer norms and role models in shaping responsible AI use, while the perceived lack of institutional support points to comparative studies across universities to identify how different policy frameworks affect adoption. Finally, expanding the scope to include diverse disciplines and international contexts would clarify whether the generational and cultural factors identified here are universal or context-specific.

Bibliographic references

Abbas, M., Khan, T. I., & Jam, F. A. (2025). Avoid Excessive Usage: Examining the Motivations and Outcomes of Generative Artificial Intelligence Usage among Students. Journal of Academic Ethics, 23, 2423–2442. https://doi.org/10.1007/s10805-025-09659-3

Batista, J., Mesquita, A., & Carnaz, G. (2024). Generative AI and Higher Education: Trends, Challenges, and Future Directions from a Systematic Literature Review. Information, 15(11), 676. https://doi.org/10.3390/info15110676

Bhaskar, P., Misra, P., & Chopra, G. (2024). Shall I use ChatGPT? A study on perceived trust and perceived risk towards ChatGPT usage by teachers at higher education institutions. International Journal of Information and Learning Technology, 41(4), 428–447. https://doi.org/10.1108/IJILT-11-2023-0220

Campbell Academic Technology Services. (2025). AI in Higher Education: A Meta Summary of Recent Surveys of Students and Faculty. https://acortar.link/7LvqMD

Fernández-Miranda, M., Román-Acosta, D., Jurado-Rosas, A. A., & Limón-Dominguez, D. (2024). Artificial intelligence in Latin American universities: Emerging challenges. Computación y Sistemas, 28(2), 435–448. https://doi.org/10.13053/cys-28-2-4822

González Torres, V. H., Lucero Baldevenites, E. V., Azpilcueta Ruiz Esparza, M. de J., Bracho-Fuenmayor, P. L., & Caballero de Lamarque, C. P. (2025). Artificial intelligence in Latin American higher education: Implementations, ethical challenges, and pedagogical effectiveness. LatIA Journal, 3, 304. https://doi.org/10.62486/latia2025304

Gutiérrez-Leefmans, M. (2025). Adoption of artificial intelligence in higher education: A diffusion of innovation approach. The TQM Journal. Advance online publication. https://doi.org/10.1108/TQM-12-2024-0523

Hamamra, B., Mayaleh, A., & Khlaif, Z. N. (2024). Between tech and text: the use of generative AI in Palestinian universities - a ChatGPT case study. Cogent Education, 11(1). https://doi.org/10.1080/2331186X.2024.2380622

He, Y., Sallam, M., Elsayed, W., Al-Shorbagy, M., Barakat, M., El Khatib, S., Ghach, W., Alwan, N., Hallit, S., & Malaeb, D. (2024). ChatGPT usage and attitudes are driven by perceptions of usefulness, ease of use, risks, and psycho-social impact: a study among university students in the UAE. Frontiers in Education, 9. https://doi.org/10.3389/feduc.2024.1414758

Ivanov, S., Soliman, M., Tuomi, A., Alkathiri, N. A., & Al-Alawi, A. N. (2024). Drivers of generative AI adoption in higher education through the lens of the Theory of Planned Behaviour. Technology in Society, 77, 102521. https://doi.org/10.1016/j.techsoc.2024.102521

Jingwei He, A., Zhang, Z., Anand, P., & McMinn, S. (2024). Embracing generative artificial intelligence tools in higher education: a survey study at the Hong Kong University of Science and Technology. Journal of Asian Public Policy, 18(2), 352–376. https://doi.org/10.1080/17516234.2024.2447195

Kim, J., Klopfer, M., Grohs, J. R., Eldardiry, H., Weichert, J., Cox, L. A., & Pike, D. (2025). Examining Faculty and Student Perceptions of Generative AI in University Courses. Innovative Higher Education, 50, 1281–1313. https://doi.org/10.1007/s10755-024-09774-w

Lu, H., He, L., Yu, H., Pan, T., & Fu, K. (2024). A Study on Teachers’ Willingness to Use Generative AI Technology and Its Influencing Factors: Based on an Integrated Model. Sustainability, 16, 7216. https://doi.org/10.3390/su16167216

Miranda, F. J., & Chamorro-Mera, A. (2025a). Exploring the adoption of generative artificial intelligence tools among university teachers. Higher Education Research & Development, 1–17. https://doi.org/10.1080/07294360.2025.2559648

Miranda, F. J., & Chamorro-Mera, A. (2025b). The impact of gender and age on HEI teachers’ intentions to use generative artificial intelligence tools. Information Technologies and Learning Tools, 108(4), 112–128. https://doi.org/10.33407/itlt.v108i4.6046

Parga García, R. A. (2023). La inteligencia artificial en el sistema educativo venezolano: oportunidades y amenazas. Revista Eduweb, 17(4), 9–15. https://doi.org/10.46502/issn.1856-7576/2023.17.04.1

Ríos-Hernández, I. N., Mateus, J.-C., Rivera-Rogel, D., & Ávila-Meléndez, L. R. (2024). Perceptions of Latin American students on the use of artificial intelligence in higher education. Austral Comunicación, 13(1), e01302. https://doi.org/10.26422/aucom.2024.1301.rio

Salas-Pilco, S. Z., & Yang, Y. (2022). Artificial intelligence applications in Latin American higher education: A systematic review. International Journal of Educational Technology in Higher Education, 19(21). https://doi.org/10.1186/s41239-022-00326-w

Sánchez-Trujillo, M. de los A., Bernabé-Sánchez, E., & Sáenz-Egúsquiza, F. D. (2025). Inteligencia artificial y formación docente: perspectivas de estudiantes de educación. Revista Eduweb, 19(3), 22–34. https://doi.org/10.46502/issn.1856-7576/2025.19.03.2

Shahzad, M. F., Xu, S., & Javed, I. (2024). ChatGPT awareness, acceptance, and adoption in higher education: the role of trust as a cornerstone. International Journal of Educational Technology in Higher Education, 21, 46. https://doi.org/10.1186/s41239-024-00478-x

Shevel, B., Palaguta, I., Marushko, L., Barkasi, V., & Zhang, G. (2025). Interactive learning using chatbots in higher education. Revista Eduweb, 19(1), 134–152. https://doi.org/10.46502/issn.1856-7576/2025.19.01.9

Soliman, M., Ali, R. A., Khalid, J., Mahmud, I., & Ali, W. B. (2024). Modelling continuous intention to use generative artificial intelligence as an educational tool among university students: findings from PLS-SEM and ANN. Journal of Computers in Education, 12, 897–928. https://doi.org/10.1007/s40692-024-00333-y

Strzelecki, A., Cicha, K., Rizun, M., & Rutecka, P. (2024). Acceptance and use of ChatGPT in the academic community. Education and Information Technologies, 29, 22943–22968. https://doi.org/10.1007/s10639-024-12765-1

Yilmaz, H., Maxutov, S., Baitekov, A., & Balta, N. (2023). Student Attitudes towards Chat GPT: A Technology Acceptance Model Survey. International Educational Review, 1(1), 57–83. https://doi.org/10.58693/ier.114

Yu, H., & Guo, Y. (2023). Generative artificial intelligence empowers educational reform: current status, issues, and prospects. Frontiers in Education, 8, 1183162. https://doi.org/10.3389/feduc.2023.1183162

This article presents no conflict of interest. This article is licensed under the Creative Commons Attribution 4.0 International license (CC BY 4.0). Reproduction, distribution, and public communication of the work, as well as the creation of derivative works, are permitted provided that the original source is cited.